A new cybersecurity in the era of quantum computing


If anticipating and preventing, rather than reacting after the fact, is always essential in cybersecurity, it is all the more so given the advance of qubits. The task is therefore not only to build a new cryptographic paradigm based on quantum algorithms once processors allow it, but to develop new protocols and encryption models well before then, so that the most sensitive data can be migrated and can withstand the next generation of processors. Governments such as those of China and the United States, as well as the European Union, are involved. For example, NIST (the US National Institute of Standards and Technology) has been working since 2017 on an initiative to update its standards to include post-quantum cryptography. After several selection phases, it is testing four encryption proposals and three secure signature proposals for identification in financial transactions.

This is why various institutions, from NASA to the Quantum Economic Development Consortium, as well as experts such as the Spanish computer scientist and philosopher Sergio Boixo, Google's chief scientist for quantum computing theory, recommend that governments and companies, especially those that handle critical information, take the matter very seriously. They advise becoming familiar with quantum culture in order to understand the scale and immediacy of the problem, and preparing to adopt new encryption models sooner rather than later.

Technological talent will be key, since the quantum paradigm implies new knowledge and concepts, unprecedented professions and specialities, and new processes and devices, even during the long phase in which it will coexist with conventional computing in hybrid mode. In fact, talent is thought to be one of the potential great bottlenecks of the quantum future, so it must be developed in sufficient quantity and quality.

The great challenge of stability

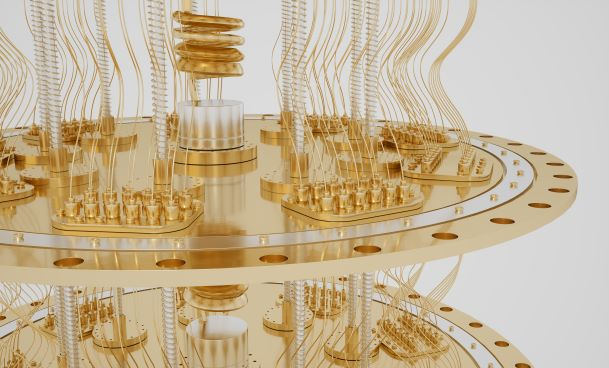

In 1997, the Nobel laureate in Physics William Daniel Phillips described the transition from traditional computing, which we still use, to quantum computing as a leap as big as the one from the abacus to the computer.

Perhaps such an emphatic statement could be qualified, but it does not appear exaggerated in terms of calculation capacity. At the end of 2019, Google presented a quantum processor with 53 qubits (the qubit being the basic processing unit, like the conventional bit) that was supposedly capable of solving in just over three minutes an operation that would take the most powerful supercomputer in the world 10,000 years. In 2021, Chinese scientists said that their Jiuzhang 2.0 processor could solve in one millisecond a problem that the same supercomputer would take about 30 trillion years to solve, i.e. never. In November 2021, IBM broke the record for qubits with a 127-qubit processor, and announced that it would debut a 1,121-qubit processor in 2023.

This technological competition could be compared with others such as the space race, artificial intelligence or nanotechnology, but quantum processing is on another level because it implies a complete change in the computing paradigm: an entirely new way of processing in terms of both the quantity and the complexity of operations. This is why Phillips said what he did about the abacus. It is also why the quantum competition between countries is not only technological but also an arms race: this new paradigm promises to render obsolete the current cybersecurity model, which was developed for computing as we know it.

Quantum processing is not a panacea and is not suitable for everything, but it is especially adept at mathematical problems that are intractable for conventional computing, from the behaviour of matter on an atomic scale to meteorological models, financial forecasting, or the encryption that protects communications, passwords and data. A sufficiently mature quantum computer could easily crack most, if not all, of today's encryption. Left unadapted, cybersecurity would be as defenceless as old mediaeval walls in the face of modern artillery.

However, the processors that we mentioned at the beginning, despite the impressive calculation figures that their developers proclaim, remain a long way from being able to crack current encryption algorithms. They will need not only many more qubits, but also a way to overcome, or at least reduce, their major Achilles heel: their enormous instability. Qubits remain operable only very briefly, they interfere with one another and so corrupt the result of the calculation, and they need to be cooled to about -273 degrees Celsius, among other things.

But beware: the sense that there is time to spare is somewhat misleading. First, although a stable and operational quantum computer remains decades away, the progressive development of processors towards that goal could suffice to decrypt strategic information, from military secrets and financial transactions to industrial or technological espionage. Second, the threat could apply retroactively if a criminal organisation, an intelligence service or a government stockpiles encrypted information now in the hope of later having sufficient quantum capacity to decrypt it. When? Some experts think quantum hackers could be capable of cracking the shield of cryptography in a decade or perhaps even sooner.

